The threat model shift

A traditional LLM is stateless and passive. It receives a prompt and returns a response. The attack surface is confined to text in and text out: hallucinations, biased content, and prompt injection through the input.

An agent is different. It has tools. It reads files, calls APIs, executes code, browses the web, spawns sub-processes. Every capability that makes agents useful also expands the blast radius when something goes wrong, whether through a compromised prompt, a misconfigured tool, or a manipulated input from an external source.

The question is no longer “what does the model say” but “what can the model do and to what.”

Blast radius - the core concept

Blast radius is the scope of damage an agent can cause if compromised or manipulated.

Without containment, a single successful prompt injection (hidden in a malicious email, a crafted file, or a poisoned web page) can pivot into full infrastructure access. The agent reads a document containing hidden instructions, interprets them as legitimate commands, and begins executing actions it was never meant to take.

With proper isolation, that same attack hits a wall. The agent is contained. What it can reach, write, and call is structurally limited, not by the model’s judgment, but by the architecture beneath it.

| Resource | Without sandbox | With sandbox |
|---|---|---|
| Production DB | Agent ──► Production DB | Agent ──► [BLOCKED] |
| Secrets store | Agent ──► Secrets store | Agent ──► [BLOCKED] |
| Internal network | Agent ──► Internal network | Agent ──► Egress gate ──► allowlist only |
| Code execution | Agent ──► Arbitrary exec | Agent ──► Scoped tool runtime |

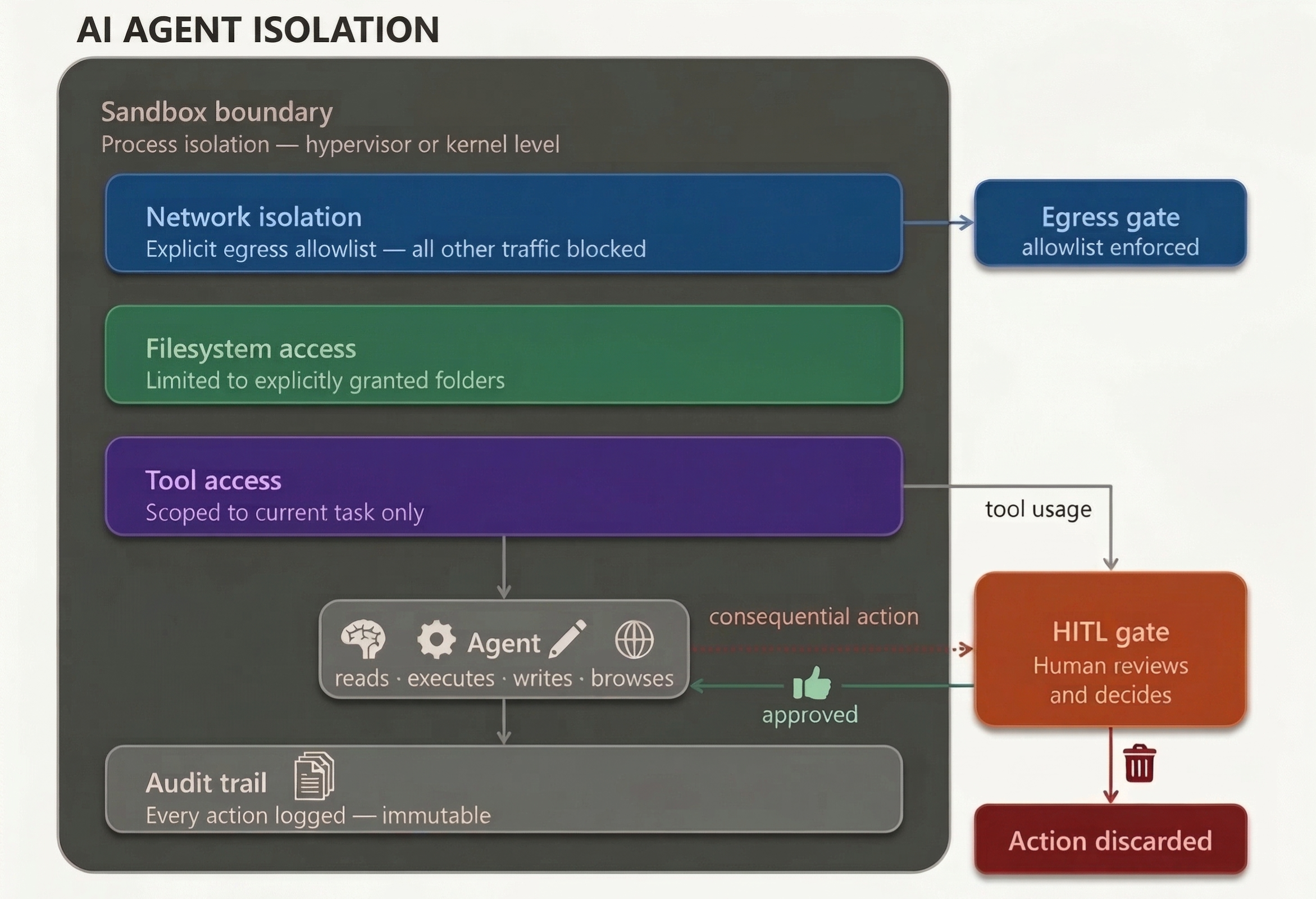

The four controls

A properly designed sandbox enforces four distinct controls. These are not configuration options; they are architectural guarantees.

1. Network isolation with an explicit egress allowlist

The agent runtime has no direct route to the internet, production systems, or internal network by default. All outbound traffic is routed through a single egress gate that enforces an explicit allowlist.

Deny-all by default. Only endpoints explicitly listed are reachable. Everything else is blocked and logged.

Example allowlist (NemoClaw / OpenShell policy YAML):

    network:
      egress:
        deny: all
        allow:
          - api.anthropic.com
          - pypi.org
          - registry.npmjs.org

This is the control that stops lateral movement and data exfiltration cold. Even if the agent is manipulated into attempting an outbound call to an attacker-controlled server, the egress gate blocks it at the network layer before any data leaves.
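
To make the deny-all default concrete, here is a minimal Python sketch of the decision an egress gate makes for each outbound request. It is an illustration only: the function name `is_egress_allowed` and the constant `EGRESS_ALLOWLIST` are hypothetical, the host list is copied from the example policy above, and a real gate enforces this at the network layer rather than in application code.

```python
from urllib.parse import urlsplit

# Hosts copied from the example allowlist; anything not listed is denied.
EGRESS_ALLOWLIST = frozenset({"api.anthropic.com", "pypi.org", "registry.npmjs.org"})


def is_egress_allowed(url: str, allowlist: frozenset[str] = EGRESS_ALLOWLIST) -> bool:
    """Return True only when the URL's host exactly matches an allowlist entry."""
    # urlsplit lowercases the hostname and returns None when no host is present.
    host = urlsplit(url).hostname
    # Exact match only: no suffix or substring matching, so look-alike
    # hosts such as "pypi.org.evil.example" are rejected.
    return host is not None and host in allowlist
```

Exact matching is a deliberate choice in this sketch. Substring or suffix checks are a common source of bypasses, because an attacker can register a domain that merely contains an allowed name.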

2. Filesystem access limited to explicitly granted folders

The agent operates on a mount-point basis. It sees only the folders you explicitly grant access to. The host filesystem, secrets directories, environment files, and system paths are invisible to the agent by default.

This means:

- No access to `~/.ssh`, `~/.aws`, `.env` files, or credential stores

- No traversal outside the granted scope

- Write access limited to designated output directories
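
The "no traversal outside the granted scope" rule can be sketched in Python as a containment check on resolved paths. This is an application-level illustration under stated assumptions: the helper name `is_path_granted` and the `/workspace/project` root are hypothetical, and a real sandbox enforces the boundary through mounts or kernel policy so the agent never sees other paths at all.

```python
from pathlib import Path


def is_path_granted(candidate: str, granted_roots: list[Path]) -> bool:
    """Return True when the candidate path resolves inside a granted root."""
    # resolve() collapses ".." segments and follows symlinks, so a path
    # like "project/../secrets" is judged by where it actually points.
    # Relative candidates are resolved against the current working directory.
    resolved = Path(candidate).resolve()
    # is_relative_to (Python 3.9+) compares whole path components, so
    # "/workspace/project-other" does not count as inside "/workspace/project".
    return any(resolved.is_relative_to(root.resolve()) for root in granted_roots)
```

Resolving before comparing is the important step. Comparing raw strings would let `..` segments or symlinks escape the granted folder.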

3. Tool access scoped to the current task

The agent is provisioned with only the tools required for the current task. A research agent has web search. A document agent has file read/write. Neither has shell access, database write access, or email unless explicitly required.

This is least-privilege applied at the tool level. Granting a full capability set “just in case” is how blast radii expand.

| Task | Tools | Human approval (HITL) |
|---|---|---|
| research | web_search, read_file | |
| code review | read_file, run_tests | |
| report gen | read_file, write_file | |
| deployment | deploy_api | ✓ |
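
The table above can be expressed as a small lookup that a tool dispatcher consults before every call. The following Python sketch is hypothetical: `TaskProfile`, `TASK_PROFILES`, and `authorize_tool` are illustrative names, not part of any tool discussed here, and the task and tool names are taken from the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskProfile:
    """Tools granted to one task type, plus whether a human must approve."""
    tools: frozenset[str]
    requires_approval: bool = False


# One profile per row of the table; nothing is granted "just in case".
TASK_PROFILES = {
    "research": TaskProfile(frozenset({"web_search", "read_file"})),
    "code review": TaskProfile(frozenset({"read_file", "run_tests"})),
    "report gen": TaskProfile(frozenset({"read_file", "write_file"})),
    "deployment": TaskProfile(frozenset({"deploy_api"}), requires_approval=True),
}


def authorize_tool(task: str, tool: str) -> bool:
    """Deny by default: unknown tasks and unlisted tools are both rejected."""
    profile = TASK_PROFILES.get(task)
    return profile is not None and tool in profile.tools
```

For example, `authorize_tool("research", "write_file")` returns `False` because a research task was never granted write access, even though the tool exists elsewhere in the system.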

4. Process isolation at hypervisor or kernel level

The execution environment is isolated at the OS level, not just in application code.

Two approaches exist:

Hypervisor-level (VM): The agent runs inside a dedicated virtual machine with a custom root filesystem. The VM is ephemeral: booted at task start and wiped on exit. Compromise is contained within the VM boundary. Nothing persists to the host.

Kernel-level: The agent process runs on the host kernel but is constrained by kernel-enforced policies. Syscalls are filtered, filesystem paths are restricted, and network access is namespaced. This carries less overhead than a full VM, with narrower but still enforceable boundaries.

| Hypervisor isolation | Kernel-level isolation |
|---|---|
| Full VM (custom Linux) | seccomp (syscall filter) |
| Ephemeral rootfs | Landlock (path enforcement) |
| Mount-point access only | Network namespace |
| Host is invisible | Policy YAML enforcement |
| Wiped on session end | Persistent state across sessions |

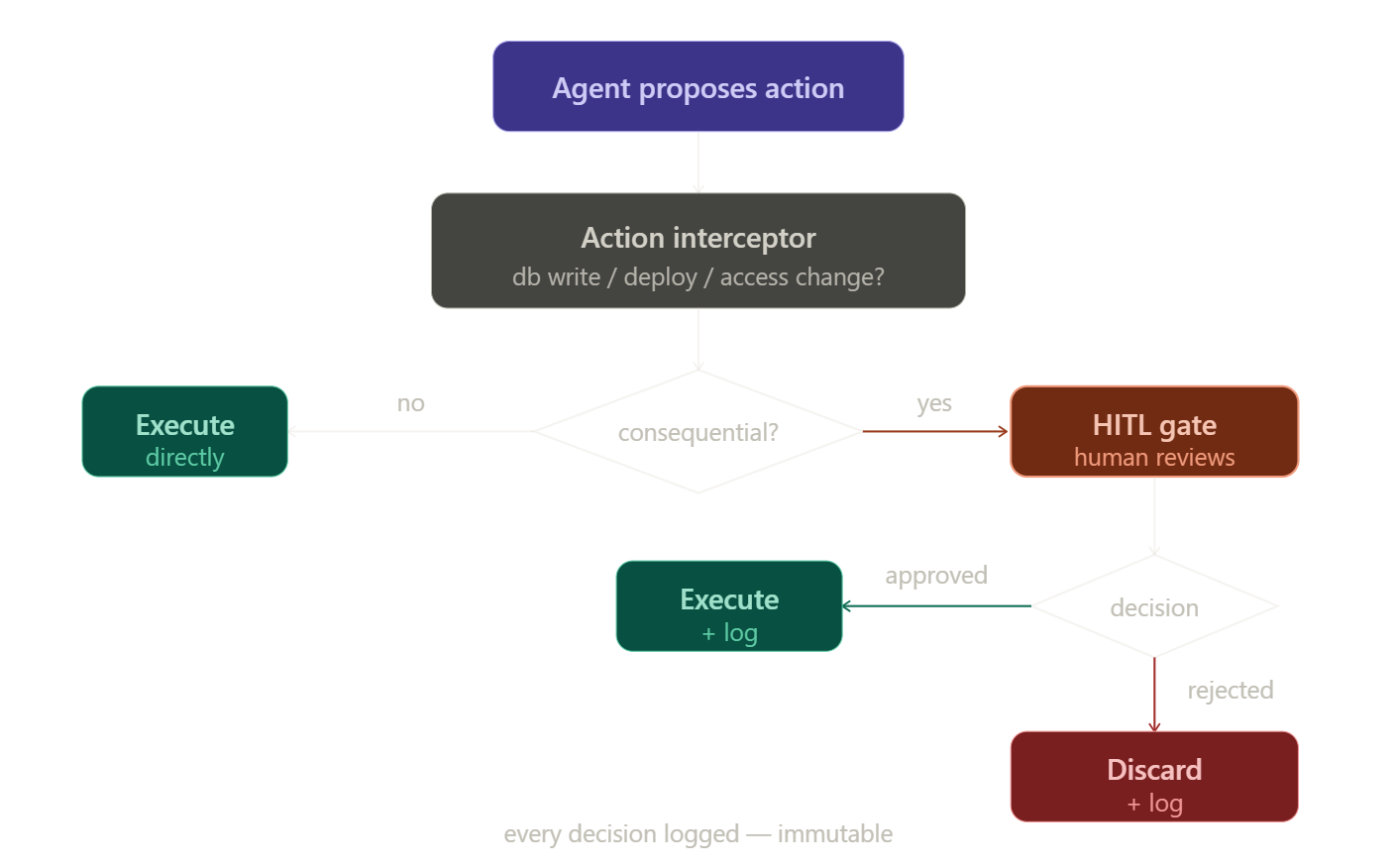

The HITL gate - where isolation meets governance

The sandbox limits what the agent can reach. But for actions with real-world consequences, such as database writes, deployments, and access control changes, containment alone is insufficient. This is where the human-in-the-loop (HITL) gate comes in.

These actions require an explicit human approval step before execution.

This is not a UX choice. It is a security control.

An agent that can autonomously execute irreversible actions at scale is a force multiplier for both productivity and harm. The HITL gate ensures that speed does not come at the cost of auditability and accountability.

How it works

Every decision, whether approve or reject, is logged immutably. The audit trail is not optional.
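
One way such a gate might be sketched in Python is shown below. Everything here is an assumption made for illustration: `AuditLog` and `run_with_approval` are hypothetical names, the `approve` callback stands in for a real human prompt, and the hash chain makes the log tamper-evident rather than truly immutable, which would require append-only storage outside the agent's reach.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Callable, Optional

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record: dict) -> str:
    """Hash a record deterministically (sorted keys give stable JSON)."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Append-only log where each entry commits to the one before it."""
    entries: list[dict] = field(default_factory=list)

    def append(self, action: str, decision: str) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        record = {"action": action, "decision": decision, "prev_hash": prev_hash}
        record["hash"] = _digest(record)  # computed before "hash" is added
        self.entries.append(record)

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS_HASH
        for record in self.entries:
            body = {k: v for k, v in record.items() if k != "hash"}
            if record["prev_hash"] != prev_hash or _digest(body) != record["hash"]:
                return False
            prev_hash = record["hash"]
        return True


def run_with_approval(
    action: str,
    execute: Callable[[], str],
    approve: Callable[[str], bool],
    log: AuditLog,
) -> Optional[str]:
    """Ask for approval, record the decision, then execute only if approved."""
    approved = approve(action)
    # The decision is logged before execution, so rejections are recorded too.
    log.append(action, "approve" if approved else "reject")
    return execute() if approved else None
```

Logging before execution means the audit trail captures the decision even when the action itself later fails.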

Implementation examples

Anthropic - Claude Cowork

Cowork applies the sandbox model at the desktop level, targeting knowledge workers without requiring terminal access.

Isolation mechanism:

- macOS: Apple Virtualization framework (VZVirtualMachine), running a full Linux VM

- Windows: Microsoft Host Compute System, providing equivalent VM isolation

- Custom Linux root filesystem, ephemeral per session

Controls in practice:

- Network access configured via allowlist in app Settings

- Filesystem access limited to explicitly mounted folders

- Session ends → VM wiped, no persistent state (except when using Projects)

- Claude surfaces a confirmation prompt before any destructive action (file deletion, significant writes) — the user approves or rejects before execution proceeds

Current limitation:

- Research preview: prompt injection vulnerability disclosed at launch (white-on-white text in documents); avoid sensitive or regulated data

NVIDIA - NemoClaw

NemoClaw is an open source stack for deploying always-on autonomous agents, built on top of OpenClaw with enterprise-grade security via the OpenShell runtime.

Isolation mechanism:

- Kernel-level: Landlock (filesystem), seccomp (syscall filter), network namespace

- Declarative policy YAML — every constraint is explicit and auditable

Controls in practice:

- Network egress governed by `openclaw-sandbox.yaml`: deny-all default, explicit allow entries

- Filesystem constraints enforced at kernel level: agent cannot access paths outside policy

- Every action intercepted by OpenShell before execution: if not in policy, blocked and surfaced

- Policy updated by operator from outside the sandbox: agent cannot modify its own constraints

Current limitations:

- Persistent state by design: always-on agents maintain memory across sessions (this is a feature, not a bug, but it means the “ephemeral filesystem” control does not apply)

- Early preview: rough edges expected, not production-ready

What is not covered

Two controls that are frequently cited but not fully implemented in either tool today:

Indirect prompt injection detection. When an agent reads untrusted content such as a web page, an email, or a document, that content arrives in the same context window as the agent’s instructions. A malicious actor can embed instructions in that content to manipulate the agent. Detection requires a separate model or classifier that inspects content before it reaches the agent. This is what NeMo Guardrails addresses, but it is a separate layer from the sandbox, not part of Cowork or NemoClaw directly.

Inter-agent trust boundaries. In multi-agent architectures, a compromised sub-agent can return malicious results to an orchestrator that executes them with higher privileges. Sandbox isolation at the individual agent level does not address propagation across agent boundaries. This remains an open problem in production agentic systems.

Key takeaways

The sandbox is not a feature you bolt on after deployment. It is the architectural foundation that makes agentic AI defensible in production.

The four controls (network isolation, filesystem scope, tool access, and process isolation) address the structural risks introduced by agents that can act. The HITL gate addresses the governance risk of agents that can cause irreversible consequences.

No single tool covers every control completely today. Both Cowork and NemoClaw represent serious engineering toward the right architecture. The gap between where they are and where production-grade isolation needs to be is the work that remains.

Moving to agentic AI is the right direction. Building the containment layer first is not optional.

Sources

Claude Cowork

- https://claude.com/product/cowork

- https://support.claude.com/en/articles/13345190-get-started-with-cowork

- https://simonw.substack.com/p/first-impressions-of-claude-cowork

- https://tallyfy.com/claude-cowork-review

- https://venturebeat.com/technology/anthropic-launches-cowork-a-claude-desktop-agent-that-works-in-your-files-no

NVIDIA NemoClaw

- https://nvidianews.nvidia.com/news/nvidia-announces-nemoclaw

- https://developer.nvidia.com/blog/run-autonomous-self-evolving-agents-more-safely-with-nvidia-openshell

- https://www.nvidia.com/en-us/ai/nemoclaw

- https://github.com/NVIDIA/NemoClaw

- https://techcrunch.com/2026/03/16/nvidias-version-of-openclaw-could-solve-its-biggest-problem-security

- https://www.cio.com/article/4146545/nvidia-nemoclaw-promises-to-run-openclaw-agents-securely

NeMo Guardrails